Gibson Environment

Gibson Environment is short for Gibson Environment for Real-World Perception Learning. It has two main components: the Gibson simulation platform and the Gibson real model database.

Multimodal Real World Environment

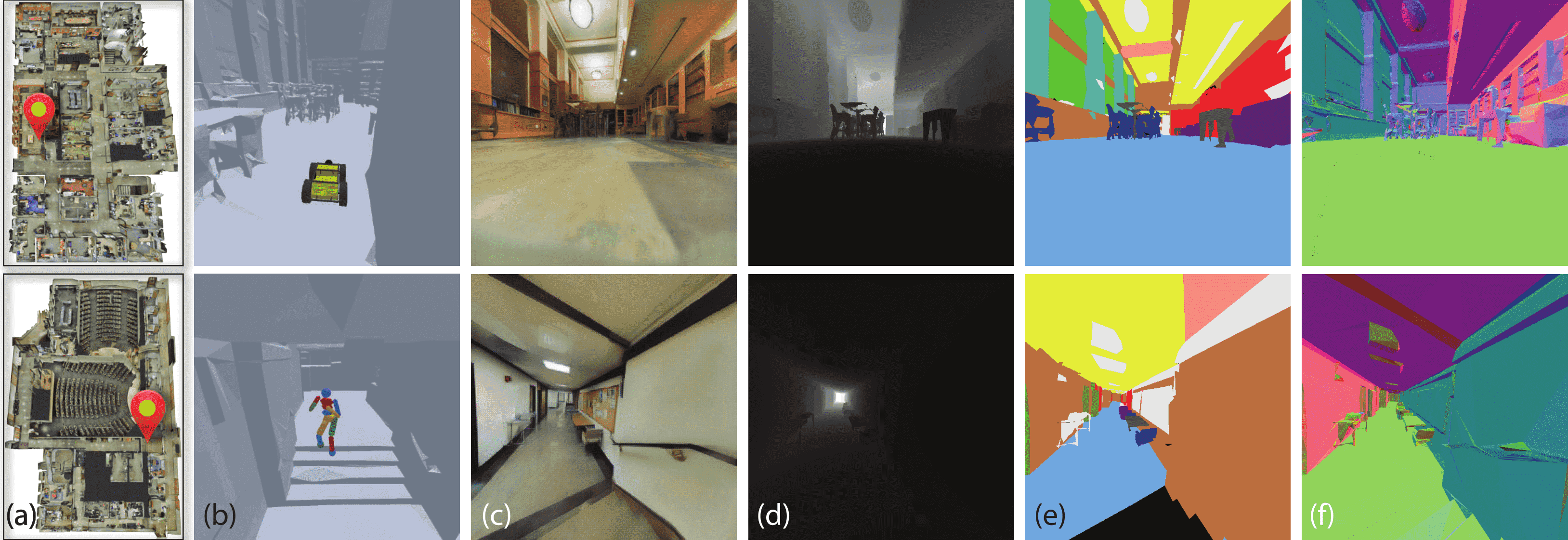

We provide RGB rendered from point clouds, along with multimodal sensor data: surface normals, depth, and semantics. The environment is also RL ready and can be wrapped as an OpenAI gym environment. Simulation runs in two modes: human mode, for debugging and visualization, and headless mode, for training.

Integrated Physics Engine

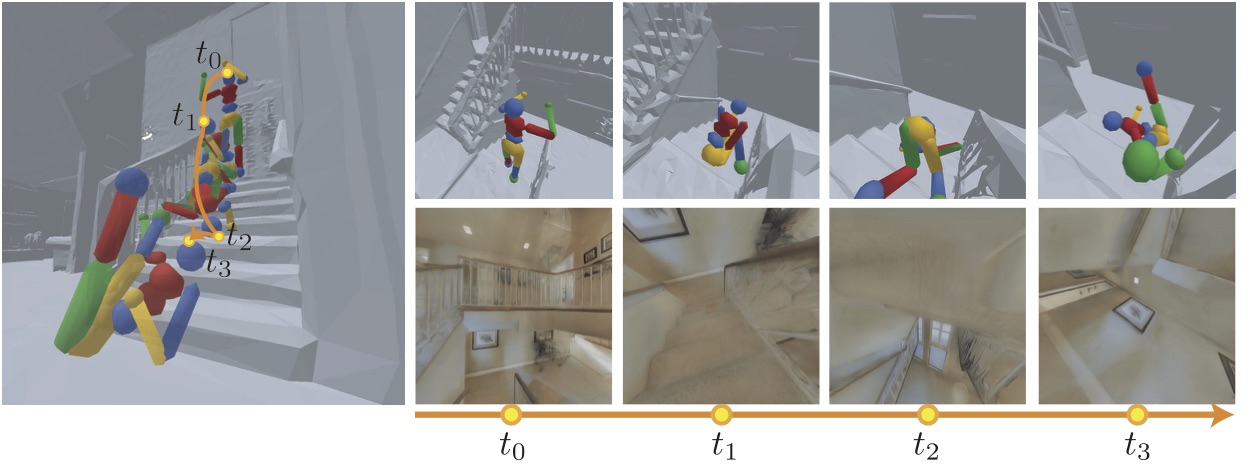

We integrate our environment with a physics engine, which exposes our agents to physical phenomena such as collision, gravity, and friction. Our physics simulator is based on the Bullet Physics Engine, which supports rigid body and soft body simulation with discrete and continuous collision detection.

We also provide a selection of different agents. Currently we support: humanoid, ant, drone and quadruped.

Optimized for Speed

Rendering speed is crucial for reinforcement learning. Our guiding principle in building the environment is to provide a very high frame rate, so that the simulation runs much faster than real time. We implemented a CUDA-optimized rendering pipeline for this purpose. We also offer several rendering resolutions to accommodate different speed requirements.
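To make "faster than real time" concrete, here is a minimal sketch of how frame rate translates into a real-time factor. The function names and the example numbers (100 frames per second, a 1/30 s simulated timestep) are illustrative assumptions, not measured Gibson figures or part of the Gibson API.

```python
def real_time_factor(frames_per_second, sim_seconds_per_frame):
    """Simulated seconds that elapse per wall-clock second.

    A value above 1.0 means the simulation runs faster than real time.
    Both arguments are hypothetical inputs chosen by the caller.
    """
    if frames_per_second <= 0 or sim_seconds_per_frame <= 0:
        raise ValueError("both arguments must be positive")
    return frames_per_second * sim_seconds_per_frame


def wall_clock_seconds(num_frames, frames_per_second):
    """Wall-clock time needed to render num_frames at a given frame rate."""
    if frames_per_second <= 0:
        raise ValueError("frames_per_second must be positive")
    return num_frames / frames_per_second


# Illustrative numbers only: 100 rendered frames per second,
# each frame advancing the simulation by 1/30 of a second.
factor = real_time_factor(100, 1 / 30)
print(f"real-time factor: {factor:.2f}x")
print(f"wall-clock time for 1,000,000 frames: {wall_clock_seconds(1_000_000, 100):.0f} s")
```

Under these assumed numbers, lowering the rendering resolution to raise the frame rate shortens wall-clock training time proportionally, which is the trade-off the resolution options are meant to expose.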

Platform Specifications

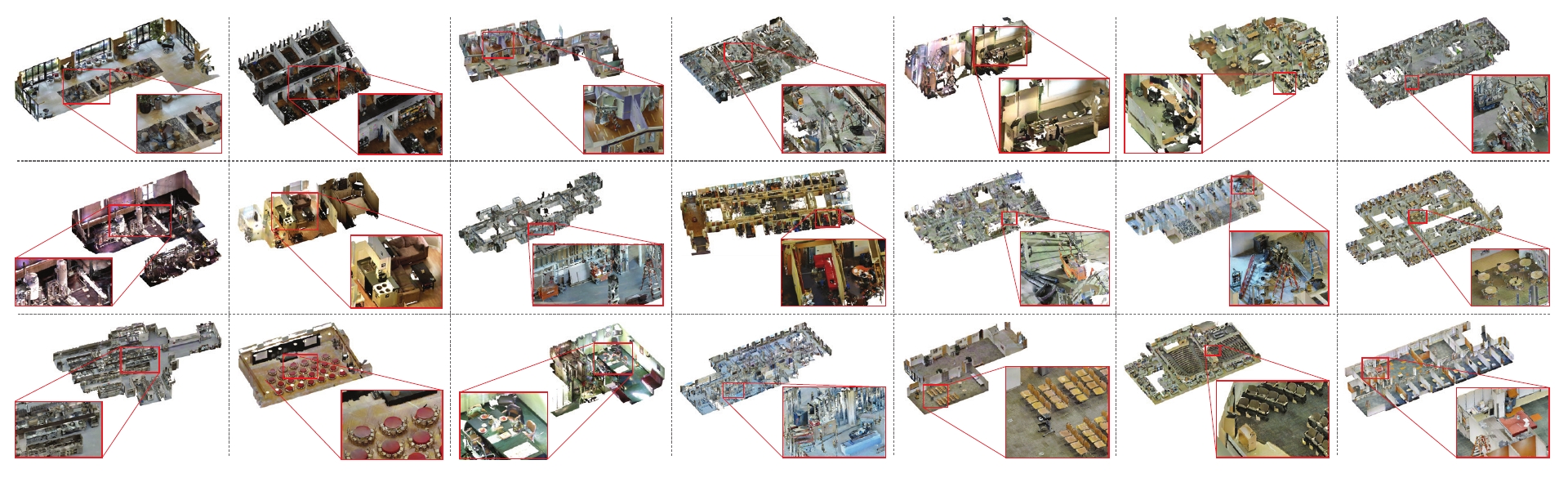

Real-world Complexity

Our images and models are sampled from real environments through 3D scanning, so they carry the complexity of real-world spaces. Compared to the House3D dataset, ours offers 160% higher navigation complexity and 72.9% higher surface complexity.

Highly Optimized

Our environment renders faster than real time. This lets us train agents efficiently inside the simulation, an advantage over training in the real world.

RL Ready

Our environment is RL ready and compatible with OpenAI gym. This means that you can deploy and start training your agents in no time. We also provide sample code for training agents, and pretrained results.
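As a rough illustration of what gym compatibility means in practice, the sketch below runs one episode against the classic gym `reset`/`step` interface. `ToyNavEnv` is a hypothetical stand-in written for this example so that it runs without any dependencies; it is not a Gibson environment, and the actual Gibson class names and constructor arguments are not shown here.

```python
class ToyNavEnv:
    """Hypothetical 1-D navigation task following the classic gym interface.

    This is NOT part of Gibson; it only mimics the reset()/step() contract
    so the training loop below can run on its own.
    """

    def __init__(self, goal=5, max_steps=50):
        self.goal = goal
        self.max_steps = max_steps
        self.position = 0
        self.steps = 0

    def reset(self):
        # Start every episode at the origin and return the first observation.
        self.position = 0
        self.steps = 0
        return self.position

    def step(self, action):
        # action 1 moves forward; any other action moves backward.
        self.position += 1 if action == 1 else -1
        self.steps += 1
        reached = self.position >= self.goal
        done = reached or self.steps >= self.max_steps
        reward = 1.0 if reached else -0.01  # small per-step penalty
        return self.position, reward, done, {}


def run_episode(env, policy):
    """Roll out one episode and return the summed reward."""
    obs = env.reset()
    total_reward = 0.0
    done = False
    while not done:
        obs, reward, done, _info = env.step(policy(obs))
        total_reward += reward
    return total_reward


# A trivial "always move forward" policy stands in for a learned agent.
episode_return = run_episode(ToyNavEnv(), policy=lambda obs: 1)
print(f"episode return: {episode_return:.2f}")
```

Any agent written against this loop should, in principle, work with an environment that exposes the same interface; only the environment construction line would change.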

3D Models

Our 3D model dataset is collected from indoor environments using fast-advancing 3D reconstruction and scanning technology. Using such a dataset can further narrow the discrepancy between the virtual environment and the real world. For each scene, we provide RGB, depth, and surface normals, and for a fraction of the spaces, semantic object annotations.

We have the largest state-of-the-art 3D model set. You can browse the models by category:

- All

- House

- Venues

- Others